TL;DR

The UK ‘snoopers’ charter’ is back in the form of the Investigatory Powers Bill (IPB [pdf]). As with previous efforts, it’s not just trying to provide a more robust legal framework for ongoing spying, but also trying to extend spying powers to other agencies. The police might see this as a way to solve crime more efficiently, but they risk undermining their trust relationship with the public. The worst part is what’s being outsourced to the telcos.

Background

I last wrote about communications interception (aka signals intelligence or SIGINT) on my friend Nick Selby’s Police Led Intelligence blog shortly after the initial Snowden revelations about PRISM. Since then there’s been a constant stream of fresh outpourings about the scope and scale of spying.

I’d once again recommend Richard Aldrich’s ‘GCHQ’ for historical perspective. One point that sticks with me is that the post-war spies got everything they wanted… right up until the nuclear-powered spy ships[1]. Only then was the line crossed. I wish I were making this up.

The Legal Argument

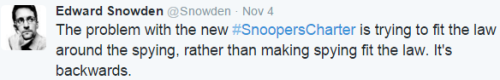

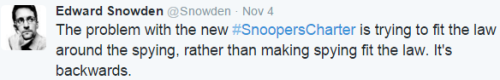

Snowden is now saying that the IPB is about making the law fit spying rather than spying fit the law.

Successive generations of the ‘snoopers’ charter’ appear to have been trying to do this very thing. On the other hand, it doesn’t seem to matter whether the spies are acting within the law (or some twisted secret interpretation of it), as nobody is going to jail – not the spies, and certainly not the politicians who seem so in love with what they get from the spies (or is it just the lobbying and political donations from the companies the spies spend money with? It’s so hard to tell).

The Social Contract

Citizens seem to be quite happy to pretend that the spies don’t exist so long as the spies remain happy to hide in the shadows. This (approximately) makes it quite OK for an agency like GCHQ to hoover up everybody’s (meta)data so long as the product gets used exclusively within the secret squirrel club. The arrangement is shored up by making intercept evidence inadmissible in court. Things get a bit murky with ‘parallel construction’, but the point here is that regular law enforcement needs to do some spadework to dig up evidence that it can use in court.

Breaking the Social Contract

Having police who are also spies is a bad thing. Germany now has some of the strongest privacy laws in the world precisely because half of that country lived under the prying eye of the Stasi, and nobody wants to go back there.

The British police claim that they want to ‘police by consent’, in line with the Peelian Principles. But they’re also claiming that they need new powers to deal with crime moving from the physical world to the virtual world.

This is where the current proposals don’t stand up to scrutiny. ‘We can’t follow somebody into a bank when the banking is online’ was part of a statement that I heard in the past week. That seems like a perfectly sound basis for the police to get a warrant and tap the communications of a suspect. It seems like a flimsy excuse for keeping a year-long store of everybody’s communications data just in case somebody does something bad[2].

The Spies Aren’t Helping (Enough) and the Police Want More

The output and impact of the secret squirrel club are necessarily constrained (otherwise it stops being secret[3]). They are therefore limited to the most serious activities – terrorism etc. Of course the (generally very senior) police recipients of the product see how helpful it can be and want more, so that they can go after a wider variety of crimes.

Policing on the Cheap?

So is this just an efficiency play, dragging the police into the 21st century where they catch people at the click of a mouse rather than going to the effort and expense of following people around in the physical world? That argument is being made, but if that were really the case, why not go all in and make intercepts admissible as evidence?

I’ll return to the earlier point: get a warrant. In the physical world there are checks and balances on police behaviour, where they have to ask permission before taking action. The IPB is being sold as providing a framework of checks and balances, but sadly these seem to be modelled on the (already discredited) US Foreign Intelligence Surveillance Court (FISC). Theresa May seems happy to apply mechanisms designed for spies to regular police, because we have a complete muddle over who’s doing spying and who’s doing police work.

Just add Telco

Nobody is handing the keys to GCHQ over to PC Plod, and this is where the trouble really starts. The spies suck up our (meta)data into their very impressive dome of concrete and steel, where it’s looked after by highly vetted and dedicated professionals. The police don’t have those resources, so they need to outsource the heavy lifting of data retention. The virtual rubber will meet the road at British Telecom, Sky and TalkTalk.

There are probably companies that care less about their customers than telcos do; I just can’t think of any off the top of my head.

And then there’s the fact that TalkTalk just got hacked six ways from Sunday, apparently by a bunch of teenagers, eliciting the usual spin about advanced ‘cyber’ adversaries. Nobody trusts these people to get a phone bill right, and they’re only just competent at moving IP packets around. It’s bad enough that we have to trust them with our data in motion, but making them look after a giant pile of it at rest is just asking for trouble.

Conclusion

We might wish for spying to fit the law rather than the law to fit the spying, but too often it seems that the actions of the ‘bad’ guys are sufficient excuse for poor behaviour by the ‘good’ guys (and in extremis this is how we generate ‘bad’ guys in the first place – a lack of transparent justice).

What our spies have been doing doesn’t seem to have been hurt too badly by being dragged into the public eye, so it would probably be fine if the IPB were simply moving the legal boundary to allow for past and present activities (even if that does inevitably lead to new boundary pushing).

Where this all goes horribly wrong is the IPB turning our police into spies, but outsourcing the real effort to some of the most loathed companies on the planet.

Notes

[1] As the British Empire contracted, so did the opportunity to have land-based intercept stations in friendly territory. This created a classic ‘capability gap’, the kind that exploits loss aversion to justify regaining what was lost at almost any cost. The answer apparently was to build giant floating intercept stations. Of course this was overtaken by events once spy satellites went into orbit.

[2] There seems to be a curious love affair with backward causality here – the ability to explain why something went wrong after it happened. This is why in pretty much every terrorism case over the past decade we find that the agencies had half an eye on the perpetrators, but had determined that they weren’t worthy of full on attention – bigger fish to fry and all that. Backward causality invariably makes the spies look bad, though it does give them a never ending reason to plead for more resources.

[3] It’s well documented how Churchill agonised over intelligence from Ultra, and the balance between saving lives in a given operation and tipping his hand to the Germans, which might have lost or disrupted future intelligence. The same problem exists for politicians today. It’s hard to believe that the spies don’t notice political party defections (e.g. Douglas Carswell or Mark Reckless), but even if the Prime Minister is told of such things, it’s hard to act on them without revealing the source.

The DNS resolver for a VPC is always at the +2 address, so if the VPC is 172.31.0.0/16 then the DNS server will be at 172.31.0.2. Amazon and the major OS distros do a good job of folding that knowledge into VM images, so pretty much everything just works with that DNS, which will resolve any private zones in Route 53 and also resolve names for public resources on the Internet (much like an ISP’s DNS does for home/office connections).
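The +2 rule is simple arithmetic on the VPC’s network address. Here is a minimal sketch using Python’s standard `ipaddress` module, assuming a VPC with a single IPv4 CIDR block; `vpc_dns_resolver` is a hypothetical helper name, not part of any AWS tooling.

```python
import ipaddress


def vpc_dns_resolver(vpc_cidr: str) -> ipaddress.IPv4Address:
    """Return the expected DNS resolver address for a VPC CIDR block.

    Hypothetical helper: assumes a single-CIDR VPC and applies the
    'base network address plus two' rule described above.
    """
    # strict=True rejects CIDRs with host bits set, e.g. "172.31.0.5/16"
    network = ipaddress.IPv4Network(vpc_cidr, strict=True)
    # IPv4Address supports integer addition, so this is base + 2
    return network.network_address + 2


print(vpc_dns_resolver("172.31.0.0/16"))  # 172.31.0.2
```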
