Miell’s Law and Token Budgets

TL;DR

Conway’s Law tells us that organisations create systems that mirror their communication structures. Jamie Dobson coins ‘Miell’s Law’ in a post about the work of our mutual friend (and his colleague) Ian Miell in his forthcoming book ‘Follow the Money’:

Organisations that design systems are constrained to produce systems that reflect the financial structures and incentives of those organisations.

We can all imagine how that applied to organisations we know from the past. But I think it’s about to be massively amplified by how money gets converted into large language model (LLM) tokens, and how those tokens get doled out to those using them.

The free ride is over

Since the arrival of ChatGPT we’ve all been able to use LLMs and the various coding assistants and harnesses built upon them at no/low cost. Chatbot type interfaces are still generally free to use, and subscriptions have been selling the underlying tokens at (fractions of) pennies on the dollar. Many commentators have compared it to the early days of Uber, where rides were being subsidised by investor capital in order to grow market share and build a ‘winner takes all’ monopolistic network.

Despite something like $1.4Tn in infrastructure investment we find ourselves in a place where there simply isn’t sufficient supply for LLM inference to satisfy the insatiable demand. But as a wise colleague once said – ‘there’s infinite demand for free stuff’.

The first cracks showed with Anthropic changing the terms for Claude subscriptions so that they couldn’t be used for autonomous agents such as OpenClaw.

Next came Google, with ‘Changes to Gemini model access and limits’.

But the move that’s likely to hit the hardest is GitHub Copilot’s changes that took effect at the start of this week (Mon 1 Jun). Folk who were paying $tens for a subscription that burned $thousands in tokens are about to be hit with the full bill. There’s going to be some bill shock at the end of the month, and some very angry CFOs.

Who gets the tokens (and how many)?

Companies are reacting to the pricing changes by introducing token limits. Simon Willison has a good analysis of Uber introducing a flat cap of $1500 per tool per user (and there’s a company that knows only too well what an ‘Uber moment’ looks like).

I’m hearing reports from elsewhere that graduated limits are being introduced. Distinguished Engineers get unlimited tokens and access to the best new models. Entry level folk get a tiny budget and may also be constrained to older/cheaper/less potent models. That’s obviously going to magnify the impact of the power structure on productivity – Miell’s Law in action. Of course a Distinguished Engineer can be super productive with their awesome experience and a huge token budget on leading models. But do these orgs really want to force their early career folk (assuming they’re still hiring any) to be less productive? Have HR even had a say in this? I can imagine some spectacular fodder for future industrial tribunals.

The days of ‘tokenmaxxing’ are likely over, with Amazon shutting down its token leaderboard. Clearly they created an incentive structure that wasn’t properly aligned with what the company actually needs/wants.

Jensen Huang has stated that he wants Nvidia engineers to use AI tokens worth half their annual salary. But of course he has GPUs to sell us (or the services supplying us), which might still put him on team tokenmaxxing. I’m also left wondering if that March budget of $250k for an engineer with a $500k salary turns into a June budget of $Millions, or if even Nvidia engineers suddenly have less to work with?
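To make that arithmetic concrete, here’s a minimal Python sketch. The $500k salary and the ‘half of salary’ ratio come from the paragraph above; the price multiplier is purely a hypothetical placeholder (I don’t know what the real change in effective token prices will be), and the function names are mine.

```python
def token_budget(salary: float, ratio: float = 0.5) -> float:
    """Dollar budget for tokens, expressed as a fraction of annual salary."""
    return salary * ratio


def budget_to_hold_usage(budget: float, price_multiplier: float) -> float:
    """Budget needed to buy the same token volume after prices rise."""
    return budget * price_multiplier


def usage_at_fixed_budget(price_multiplier: float) -> float:
    """Fraction of the original token volume that a fixed budget still buys."""
    return 1.0 / price_multiplier


# Assumption: effective token prices rise 10x once subsidies end.
HYPOTHETICAL_MULTIPLIER = 10

march_budget = token_budget(500_000)
print(f"March budget: ${march_budget:,.0f}")
print(f"Budget to hold usage: ${budget_to_hold_usage(march_budget, HYPOTHETICAL_MULTIPLIER):,.0f}")
print(f"Usage if budget is fixed: {usage_at_fixed_budget(HYPOTHETICAL_MULTIPLIER):.0%}")
```

Either the budget scales with the multiplier, or the engineer gets a correspondingly smaller slice of tokens; there isn’t a third option.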

What’s fixed and what’s variable (and has any of this been budgeted)?

Finance folk will often refer to fixed costs and variable costs; and headcount often lies awkwardly in the middle (depending on how easy it might be to hire and fire, which in turn can depend a lot on local labour laws).

From a Conway/Miell’s Law perspective the good old org chart reflects a whole bunch of budget that’s been allocated where the people below the apex have almost no discretion on changing things.

We now get to overlay token allocations onto that org chart, and discover how much discretion is associated with them. I’d speculate that approximately zero organisations going through their FY26/27 budget planning had an accurate notion of 26H2 token costs or where the allocations would flow to, which means everybody is now making it up as they go along (or ‘being agile’ if you prefer).

Conclusion

AI coding assistants have been seen to boost productivity (especially for knowledgeable people who are good at articulating what they want); and that productivity boost was a ‘no brainer’ when the cost was (approximately) free. But the costs are shooting up, in part to ensure that demand is constrained to meet limited supply. That’s forcing organisations to think about how they allocate tokens, which is introducing a new dimension of financial structures and incentives. Miell’s Law might only have just been coined, but it’s going to be an important thing to consider as the post free ride budgets get figured out.

Update 9 Jun 2026 – I like this post (the Hello, World! section) where Ken Corless talks about how they’re managing token budgets at Deloitte.

Filed under: technology

Tags: AI, amazon, budget, ChatGPT, Claude, coding, Conway, Conway's law, CoPilot, finance, Gemini, google, HR, Jenson Huang, Miell, Miell's law, NVidia, tokenmaxxing, tokens, Uber

May 2026

Pupdate

It was Milo’s 5th birthday on the 12th, which meant a post about how he’s getting on.

Sadly Max has also needed to visit the vets, with (we think) back ache, which might be the dreaded Intervertebral Disc Disease (IVDD). He’s been on reduced activity, so shorter walks, but thankfully seems pretty much back to normal after some painkillers and anti-inflammatory treatment.

Tech stuff

2.5gb ethernet

Having accumulated a handful of things that have 2.5gbe ports I’ve taken the plunge and bought some newer switches to connect them. They were a little fiddly to set up, hence the VLANs on Sodola managed switches post; and I notice that newer firmware has modified that config page a little.

Of course I couldn’t stop at just the things that already had 2.5gbe, so I’ve added new interfaces to my NAS and VMware box, as (aside from my desktop) they’re the things that create the most traffic copying files back and forth. For the NAS I got a USB adapter with an RTL8156 that works with these drivers, and for the VMware box a DollaTek M.2 card (affiliate link) that works with the Realtek Network Driver for ESXi. Everything seems to be reporting around 2.35Gbit/s on iperf3 :)
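A figure of around 2.35 gigabits per second is pretty much what the framing overheads predict for TCP over a 2.5 gigabit link. Here’s a rough Python sketch of that calculation, assuming a standard 1500 byte MTU, IPv4, no VLAN tag on the wire, and TCP timestamps enabled (I haven’t checked that all of those hold for every link in my setup).

```python
LINE_RATE_GBPS = 2.5
MTU = 1500

# Per-frame bytes on the wire beyond the MTU:
# Ethernet header (14) + FCS (4) + preamble/SFD (8) + inter-frame gap (12)
ETH_OVERHEAD = 14 + 4 + 8 + 12

# Bytes inside the MTU that aren't payload:
# IPv4 header (20) + TCP header (20) + TCP timestamp option (12, assumed on)
IP_TCP_HEADERS = 20 + 20 + 12


def tcp_goodput_gbps(line_rate: float = LINE_RATE_GBPS, mtu: int = MTU) -> float:
    """Best-case TCP payload rate for a given line rate and MTU."""
    payload = mtu - IP_TCP_HEADERS
    wire_bytes = mtu + ETH_OVERHEAD
    return line_rate * payload / wire_bytes


goodput = tcp_goodput_gbps()
print(f"{goodput:.3f} Gbit/s, or about {goodput * 1000 / 8:.0f} MB/s")
```

So the links are running at essentially full speed, and the ‘missing’ 6% or so is headers and inter-frame gaps rather than a problem with the adapters or switches.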

MacBook Neo

I had a (dated at the time) MacBook during my time at Cohesive over a decade ago for when I needed a Mac, but I never got along with it. But… I’ve been impressed with the reviews of the MacBook Neo, and it would be handy to have something that can (occasionally) run Xcode. So I got an Indigo 512GB one.

Early signs are very good (despite only 8GB RAM). It’s perfectly capable of running multiple browser and Ghostty tabs, which is the main thing I need from a laptop. Battery life is amazing, and it goes for days between charges. Better integration with my iPhone, iPad and iMessage seems very useful, so overall I’m impressed :) I’m going to see how I get on with it in my travel bag instead of my ThinkPad.

The only time it seems sluggish is when using Chrome. Task switching to Chrome takes an age, as does opening a new tab. I suspect Chrome might be a massive memory hog, and provokes a bunch of swap file activity.

Scooter fettling

My Vespa started cutting out when accelerating from a standstill, and a conversation with Gemini suggested that I needed a new carburettor (and maybe bigger jets). The new carb is in, and after some trial and error it’s running with the factory jet :/ But… at least it seems to be running properly again.

Eyes

I’m done with having to administer drops multiple times a day, and my ocular pressures are back to normal – yay :) I also seem to have found the right glasses for computer work (+0.75) and reading (+1.5). The light sensitivity I was experiencing at the end of last month has gone, so I’m probably not in the market for new sunglasses. Though there are times when I feel some varifocals might be useful (even if the ‘distance’ lens is planar).

But… my most recent check identified that I’m developing a ‘secondary cataract’ (aka Posterior capsule opacification (PCO)), which is going to need YAG laser treatment in a couple of months.

Solar Diary

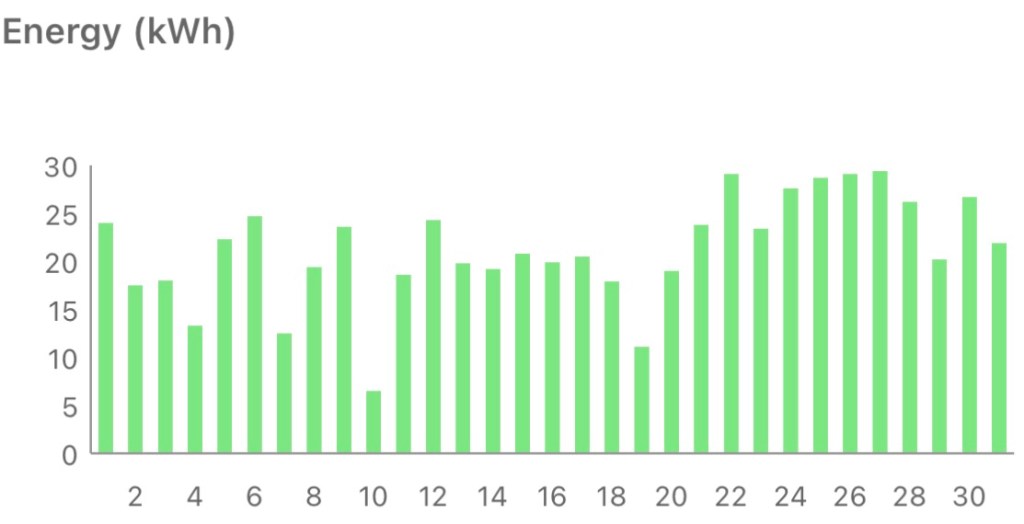

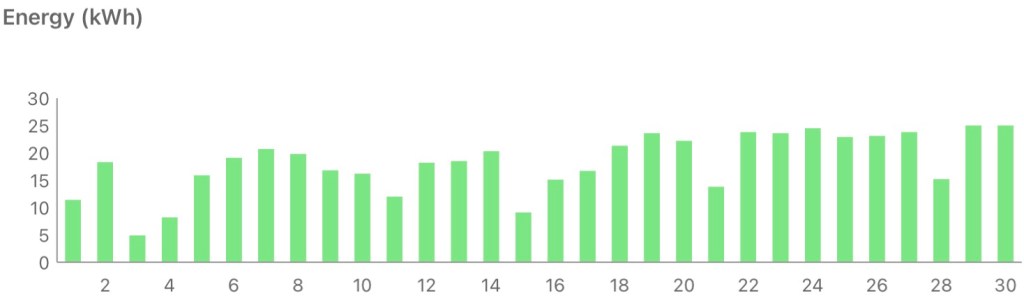

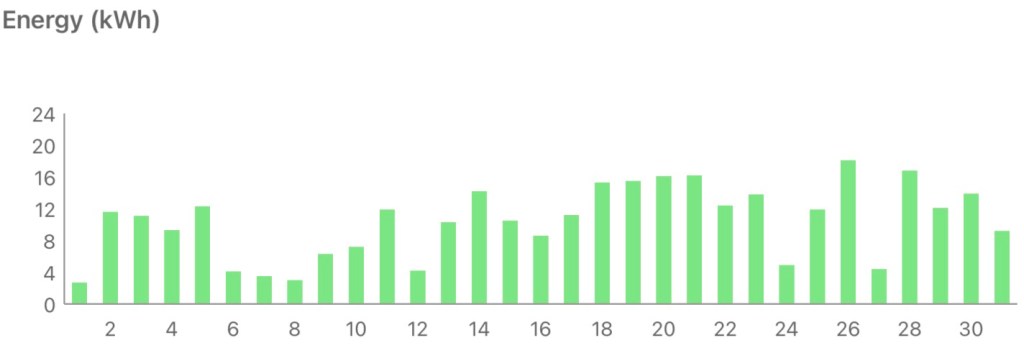

The first part of May felt like it was making up for the lack of April showers, but that gave way to a week long heatwave (and lots of sunshine). The effect of heat on production can be seen with a ~5% drop between the 22nd (29.1kWh) and 24th (27.6kWh), though subsequent days bounced back up to 29.1kWh (and then 29.4kWh) even though it remained hot.

Total production was a little short of the best May yet (2023), but better than the last couple of years.

Filed under: monthly_update, technology

Tags: 2.5gb, 2.5gbe, cataract, dachshund, ethernet, MacBook, Miniature Dachshund, Neo, networking, Realtek, solar, Vespa

Milo cancer diary part 23 – Five

Milo is five today, which he’s mostly celebrating by snoozing on the office sofa behind me.

He’s nearing the end of his fourth (modified) CHOP protocol, with just over 4 weeks and 3 more vet visits to go.

Since going into remission at the start of the year things have proceeded mostly uneventfully, which is how we like it. The one hiccup has been when the vet couldn’t get a line in for Vincristine. A little manoeuvring was needed around winter half term and Easter holidays; but that was due to vet availability rather than anything going on with Milo.

ManyPets have been prompt with payments, with everything up to date at time of writing. There should be enough to get him to the end of this protocol, but the follow up scan will likely tip things past the annual policy limit before it resets in August. Hopefully he gets another extended remission to enjoy the summer.

Past parts:

1. diagnosis and initial treatment

Filed under: MiloCancerDiary

Tags: birthday, cancer, chemo, chemotherapy, CHOP, insurance, lymphoma, Miniature Dachshund, remission

VLANs on Sodola managed switches

TL;DR

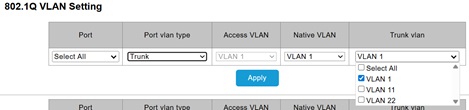

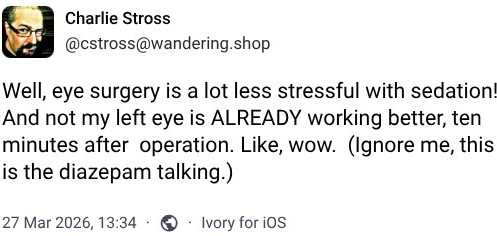

There’s an aspect to the web user interface of Sodola switches that’s far from obvious, and not documented :/ When setting up trunk ports it’s necessary to select all the VLANs that will be carried, and the default is only to select VLAN 1.

Background

I’m starting to accumulate a small selection of things with 2.5gbe ports – my GL.iNet Flint 2 router, the motherboard in my silent desktop, and the Zyxel NWA50AX Pro access point I got to complement the Flint 2 (running OpenWrt naturally). It wasn’t practical to plug everything directly into the Flint 2, so I needed some new switches.

With some building work going on in the house the physical trunk running between the coat cupboard containing the router and my office with the PC and access point was open, so I took the opportunity to drop a fiber SFP cable (affiliate link) to create a 10G network trunk. I just needed a pair of switches to light up that link, and I went for a Sodola 6 port (SL902-SWTGW124AS) and 9 port (SL902-SWTGW218AS) [affiliate links] to replace 5 port and 8 port TP-Link gigabit switches.

VLANs

My VLAN setup is fairly straightforward with the following 802.1Q tags:

- 1 – Default home network

- 11 – Guest network (mainly used for WiFi)

- 22 – Devices network

The 6 port switch needed tag 22 to emerge on the wire for the network dongle in my Growatt inverter[1]. Here’s the config I ended up with:

This wasn’t working initially because the default when creating a trunk is to select just VLAN 1, and the checkboxes to select all (or pick specific tags) weren’t obvious to me.
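The failure mode is easy to model. This Python sketch isn’t the switch’s API or config format (the `TrunkPort` class and port names are made up for illustration); it just shows why a trunk left at the default of VLAN 1 silently drops the tagged traffic for my other networks.

```python
from dataclasses import dataclass, field

HOME, GUEST, DEVICES = 1, 11, 22  # the 802.1Q tags listed above


@dataclass
class TrunkPort:
    """Toy model of a trunk port and the VLANs selected for it."""
    name: str
    # Mirrors the UI default described above: only VLAN 1 is selected.
    allowed_vlans: set[int] = field(default_factory=lambda: {1})

    def carries(self, tag: int) -> bool:
        """True if frames with this VLAN tag pass over the trunk."""
        return tag in self.allowed_vlans


default_trunk = TrunkPort("trunk-default")
fixed_trunk = TrunkPort("trunk-fixed", allowed_vlans={HOME, GUEST, DEVICES})

for port in (default_trunk, fixed_trunk):
    dropped = [tag for tag in (HOME, GUEST, DEVICES) if not port.carries(tag)]
    print(f"{port.name}: drops {dropped or 'nothing'}")
```

With the default selection, anything tagged 11 or 22 never makes it across the trunk, which is exactly what I was seeing with the inverter dongle.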

Saving config

A few reviews for these switches complain about them being forgetful, and I can see why. I tripped across this myself after setting an IP on my home network subnet, and then relocating the switch and finding it had returned to the default 192.168.2.1 :(

All that’s needed is to press the ‘Save configuration’ button after making changes, but that’s a break from the usual expectation that changes stick.

Conclusion

With VLANs set and config saved I’m happy with these switches, and I can see myself adding more as I add more 2.5G and 10G to my network.

Filed under: networking, review

Tags: 10G, 2.5G, 6 port, 802.1Q, 9 port, config, fiber, OpenWRT, SFP, SFP+, SL902-SWTGW124AS, SL902-SWTGW218AS, Sodola, tag, trunk, UI, UX, VLAN, VLANs

April 2026

Pupdate

It’s been mostly warm and dry, so plenty of opportunities for longer walks :)

Milo is now on the final cycle of his 4th chemo protocol, and it’s proceeding OK.

Toronto

We started the month in Toronto, which was a really fun trip deserving its own post.

Eyes

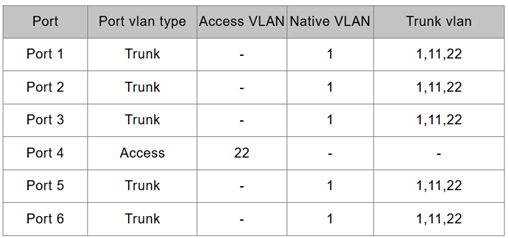

My cataracts are gone, and I now have a pair of Johnson & Johnson TECNIS PureSee(TM) extended depth of field (EDoF) intraocular lenses (IOL). The procedure to fit them was as straightforward as I was told it would be; though I was glad that Charles Stross went a little ahead of me and provided the tip to take any sedation on offer.

It takes a while for things to heal and settle down, but I’m already pretty happy with my distance vision. Computer work and reading are another matter, and I’m still figuring out which glasses I’ll need. EDoF lenses are supposed to work down to intermediate distance, but I fear my home office monitors are just a little too close. That’s maybe not so bad, as I quite like having blue filter lenses when doing computer work. I just need something better than the cheap readers I got for reading restaurant menus whilst wearing contact lenses for skiing.

I may also be in the market for some new sunglasses (maybe even some varifocals for reading books outside), as I seem to be much more sensitive to bright light than I have been for the last 20y or so[1].

Complications

Everything seemed to be going fine until my one week check, where they picked up high intraocular pressure (glaucoma). They wouldn’t let me go home until it was normalised, which meant taking some pills, and a new eye drop regime for the next few weeks.

OpenWrt

I missed the release of OpenWrt 25.12 at the end of last month, and it’s already at a .2 patch.

Upgrading has become really easy with ‘Attended SysUpgrade’ (ASU). I was able to go from 23.05.x on my router and WiFi access points in a matter of minutes. All the config and packages carried over without a hitch :)

Even better, the manual install of NoPorts I had on my router got automatically replaced by the csshnpd and luci-app-csshnpd packages that are now in upstream :)

Gemini Pro

I mentioned last month that I’m using Gemini CLI a fair bit, which was a good reason to take up the offer of a free year of Gemini Pro for Google Developer Experts (GDEs). But… I first had to move my GDE account from Atsign’s Workspace to my personal gmail. I’d say the migration has been worth it, as I get much better access to premium models.

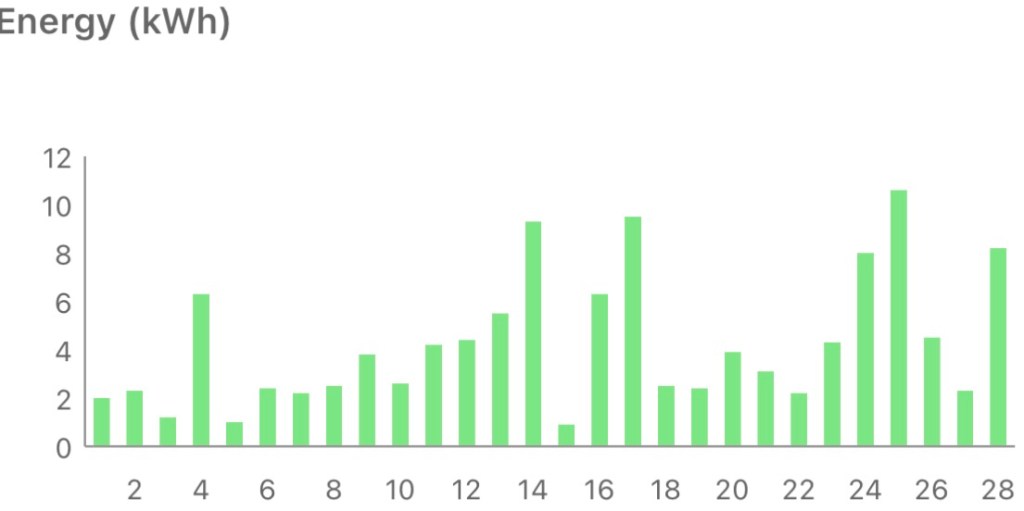

Solar Diary

The sunniest April since the system was installed :)

Is iBoost worth it?

As part of the install I got an iBoost device, which diverts excess production into the hot water tank immersion heater (rather than it going for export). It’s quite a complex bit of kit, doing pulse width modulation (PWM) in order to work over a wide power range. I paid £428.57 for mine (though it seems the newer iBoost+ is available online for a little less).

I’ve been meaning to build a payback model, but instead I got Gemini to write me a Python script. The outcome isn’t great… the whole thing is predicated on expensive gas and miserly export tariffs. Running the numbers for the present gas price and my old ‘smart export guarantee’ (SEG) rate it takes about 70y for the iBoost to pay for itself. With the SEG rate I’m presently getting I’m better off exporting the electricity and using the money to buy gas for heating water :0 That’s not very eco, but that’s where we are in a topsy-turvy UK energy market :/
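This isn’t the script Gemini wrote, but the shape of the model is simple enough to sketch in a few lines of Python. Only the £428.57 purchase price comes from above; the diverted energy, gas price, export rates and boiler efficiency in the example are placeholder assumptions rather than my actual figures, so don’t expect it to reproduce the 70y result.

```python
import math

IBOOST_COST_GBP = 428.57  # what I paid, from above


def annual_saving(diverted_kwh: float, gas_price: float, export_rate: float,
                  boiler_efficiency: float = 0.9) -> float:
    """Net yearly benefit (GBP) of diverting solar to the immersion heater.

    Prices are in GBP per kWh. Each diverted kWh avoids buying gas (adjusted
    for boiler efficiency) but gives up the export payment for that kWh.
    """
    gas_cost_avoided = diverted_kwh * gas_price / boiler_efficiency
    export_income_lost = diverted_kwh * export_rate
    return gas_cost_avoided - export_income_lost


def payback_years(cost: float, saving: float) -> float:
    """Years to recover the cost; infinite if diverting loses money."""
    return cost / saving if saving > 0 else math.inf


# Placeholder inputs (assumptions): 1000 kWh/year diverted, gas at 6p/kWh,
# and a low (5p) versus a higher (15p) export rate.
for export_rate in (0.05, 0.15):
    saving = annual_saving(1000, gas_price=0.06, export_rate=export_rate)
    print(f"export {export_rate:.2f} GBP/kWh -> payback {payback_years(IBOOST_COST_GBP, saving):.1f} years")
```

The key point is the sign of the saving: once the export rate is higher than the effective cost of heating water with gas, the payback period is never.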

Note

[1] Until my 30s I’d wear sunglasses pretty much all the time outside, but then I switched to baseball caps. Seems like I might now favour both.

Filed under: monthly_update

Tags: cataract, EDoF, eye, glasses, glaucoma, iBoost, IOL, Miniature Dachshund, NoPorts, OpenWRT, pupdate, solar, surgery, Toronto

Toronto

TL;DR

Toronto is a fantastic city, with plenty to keep us entertained over our 6 night stay.

Why Toronto?

We’d originally talked about returning to Halifax, Nova Scotia, which we last visited in 2000; and then $wife announced that she’d like to go somewhere new.

Getting there

We picked flights with Air Canada on their A330, choosing the extra legroom seats in 34 H&K, which is a nice setup for couples travelling together. The flight was entirely unremarkable other than having a decent IPA on offer in the shape of Hop Valley Bubble Stash.

Hotel

We picked the Riu Plaza Toronto because it seemed to be the only hotel offering inclusive breakfast (and also well reviewed). It was a fantastic base for the trip with a spacious and comfy room. The reception area was lively, but not too busy, and we always got a friendly welcome on our way through to the lifts.

Breakfast was busy each day, but we only had to wait in line for a table for more than a moment once. The buffet selection offered plenty of variety, and got our days off to a good start.

Despite taking my gym clothes I didn’t use the gym apart from as a source of water for my hydroflask.

I’d definitely choose the hotel again, and for a brand I’d not seen before Riu is on my radar as an operator that’s getting things right.

Activities

Walking around

Our first day started with a huge trek around the city, taking in many of the spots that had been recommended to us by friends who’d visited ahead of us, and local colleagues. Our route took in the lakeside, St. Lawrence Market, The Distillery, and The Well.

Other days included Graffiti Alley, exploring Chinatown and the shoreline parks to the East of the Entertainment District.

Meeting the team

A bunch of Atsign folk are based in Toronto, and our head of sales was in town for some meetings; which provided the perfect excuse for a get together. It was wonderful to meet folk in person who are normally talking heads on a Zoom (and apparently I’m taller in real life!).

Art Gallery of Ontario

One of the local tips was to visit the Art Gallery of Ontario (AGO) on ‘First Wednesday Night Free’, which was perfect timing as it coincided with our first full evening in town. I’d booked tickets earlier in the week, which might have been a good thing, as it seemed popular.

Niagara Falls

Niagara Falls should be a 2h20 train ride from Toronto Union station, but it turned out that the lines were blocked due to a derailment, meaning we had to switch to a bus at Burlington, which is around half way there. The journey was thus less comfortable than it might have been, but we still got there in good time.

The walk from the railway/bus station is very straightforward, as it follows the path of a disused railway line.

After wandering along the promenade by the falls we had lunch in the Skylon Tower, which provided a fantastic view.

Islands

We had a lucky break with the weather for our day visiting the Toronto Islands, as it was sunny and warm(er) (after being near freezing on the days before and after). The ferry took us to Ward’s Island, and we walked to Gibraltar Point Lighthouse before turning back.

It’s easy to see why it’s a popular spot in the summer.

Comedy

‘Consumption-friendly’ was a new euphemism for me, but we didn’t pick the club describing itself that way. Instead we headed to the Backroom Comedy Club for a set featuring Tyler Horvath. It was a fun, intimate venue; and Tyler was great, along with the line up of local acts who warmed up for him.

Monet Expo

Claude Monet: The Immersive Experience is a similar setup to the Van Gogh Immersive Experience we went to in Singapore a few years ago; though it was a lot less busy, and a bit more of a schlep to get there. Being less busy meant it felt much less pressured in terms of moving along to make space for the next wave of people, which made for a relaxing trip :)

Food and drink

I’d got a bunch of craft ale recommendations from a friend visiting Toronto a few weeks previously, some of which I followed up, some we didn’t find time for. It felt like everywhere had good beer and good food. Bar Hop got our poutine fix sorted on our arrival evening. After meeting the team at Blessing in Disguise we returned for some excellent charcuterie, and more great service from Daryl. There was a bit of a line to get into Amsterdam Brewhouse after our islands trip, but it was worth the wait for the brisket (and beer). I think they were caught out a little by the nice weather, as the line was even longer as we left.

Maybe the best meal of the week was at Koh Lipe, which had been recommended by a colleague. But we also both really enjoyed Byblos.

There were of course donuts from Tim Hortons, including some of their Easter specials; though the really special ones were the maple butter…

One thing I wasn’t expecting was so much (good) local wine, and if we’d had more time a winery tour might have been fun.

Getting around

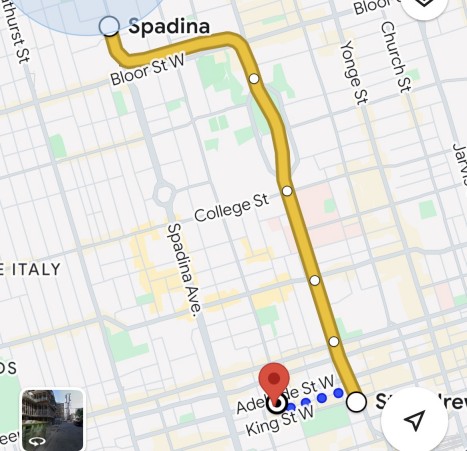

Toronto has great public transport, with an integrated payments system called Presto that works across trains, subway, trams and buses. After being initially pointed at the Presto app, I figured out we could just use contactless (inc Apple Pay).

Maps bother

Unfortunately Google Maps, Citymapper etc. don’t seem very savvy about getting around using that integrated system. We had to figure out for ourselves that we could connect from subway to tram and save on the walking a little[1].

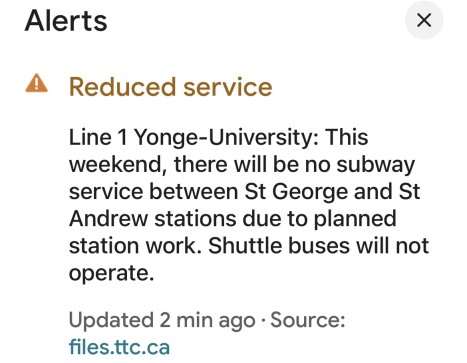

The maps apps were also terrible at dealing with closures and interruptions. There might even be a notification in the app, but the data hadn’t been factored into the picture being presented. It’s a good job we left early for the comedy club, as it turned out the 1 subway was suspended on exactly the segment we needed for the start of the journey :(

After spending time in cities where the apps correctly (re)route this was a bit jarring :/

Note

[1] This would have been handy to know on arrival, and saved us on getting a (ripoff?) taxi from Union Station to the hotel.

Filed under: travel

Tags: art, Atsign, beer, comedy, food, hotel, Islands, Monet, Niagara Falls, Riu Plaza, Toronto, travel

March 2026

Pupdate

We’ve (finally) had some warm and sunny days, so the coats have mostly been off for walks :)

Bath Half

$daughter0 is in her final year of her degree at Bath, and after getting into running last year she decided to run the Bath Half with some friends. That provided a good excuse for a weekend in Bath to support her (and of course visit some of our favourite restaurants and bars) :)

She completed the course in a (personal best) little under two hours.

AI training

Early in the month Google held an AI ‘train the trainers’ event at their London HQ. Attendees were a mixture of Google Developer Experts (GDEs), Google Developer Group (GDG) organisers and meetup organisers for various other Google platforms.

Whenever GDEs bump into each other for the first time it’s common for them to ask ‘what type are you?’ (meaning Android, Cloud, Dart/Flutter, Web or whatever), but at the community dinner somebody observed “we’ll all be AI by the end of the year”. That might not play out in practice, but I have a sense that it’s spot on in principle. Product specific knowledge isn’t the lever it used to be, versus being able to construct a good prompt.

The day itself gave me the chance to try out Gemini CLI and Antigravity. It was time well spent, as the following afternoon I got about a week’s worth of work done with Gemini CLI.

Conferences

The third week was very busy with two conferences back to back. It’s the first time I’ve ever spent a whole week in London, as the early starts and late finishes meant daily commuting wasn’t really practical.

Both QCon London 2026 and Monki Gras 2026 were (I think) the best yet :) More in the linked posts.

USB Shaver chargers

Whilst in Bath I went to use my shaver on the day of the race and nada… out of juice. Thankfully the front desk at the Doubletree was able to help me out with a shaving kit, but I was annoyed that I needed one.

The USB shaver charger I made a few years back is fine for longer trips (when I know I’ll need a recharge), but a little bulky to always have with me.

So… I went looking on Amazon and AliExpress, and found that other options are now available. I ordered a couple of these from AliExpress, though it seems similar ones are also available on Amazon (affiliate link) for a few pennies more, but faster shipping. They’re small enough that I can have one in each of my travel packs (UK/EU and US), and I already have the USB C adaptors there. On reflection I may only need one, which can live in the shaver case.

Cataracts

I’ve been back for more measurements and consultation, and my operation is booked in for next month.

Solar Diary

Not the best March so far, but one of the better ones.

Filed under: monthly_update

Tags: AI, Bath, cataract, conference, conferences, dachshund, GDE, half marathon, Miniature Dachshund, Monki Gras, pupdate, QCon, solar, USB-C

QCon London 2026

TL;DR

QCon is one of my favourite events, and I’ve been to a lot of them over the years. 2026 was the best yet, so kudos to the programme committee and C4media organisers. The most fun bit was hosting the security track, where I got to run a mini security conference within the conference, with my dream line up of speakers. I loved it, and I hope everybody else who joined us across the day also enjoyed it.

20 Years of QCon

This year’s T-shirt proclaimed ’20th anniversary’, and ‘Est. 2006’, but that’s stretching things a little. Planning might have started in 2006 (and the announcement is still live on InfoQ, along with the PDF brochure), but the first QCon was in 2007. I should know, I was there as a speaker, co-presenting with Craig Heimark. It’s amazing to look back to that first instance and the line up of industry legends like Cameron Purdy, Martin Fowler, Rod Johnson and Werner Vogels.

There’s also not been 20 instances, as (like so many other in person events) things ground to a halt in 2021 due to COVID.

I’ve personally been at (or involved in[1]) 15 of the 19 instances.

Keynotes

All four keynotes this year hit the mark, and Laura Savino’s ‘Learning Out Loud’ absolutely smashed it – maybe the best keynote I’ve seen. Witty, engaging, thought provoking, relatable and something that spurs you to take action – everything that a keynote should be.

Track talks

I pretty much filled my schedule with talks on Monday and Wednesday (when I wasn’t hosting the security track). With only one exception the talks were good. I particularly enjoyed Hannah Foxwell (who’s always great) with ‘The Reinvention of the Dev Team’ (InfoQ: AI Agents Write Your Code. What’s Left For Humans?) and Christine Lemmer-Webber with David Thompson on ‘Spritely: Infrastructure for the Future of the Internet’[2].

Security track

Software Security & Risk Management if I must give the track its full title. I was delighted when Werner Schuster reached out to ask me to host the track, and almost immediately I pulled together a dream list of speakers. The programme committee gave me the green light, and everybody said yes :) [3] So this was very much the security track that I wanted to attend.

The topic and speakers were obviously popular, as we were in the ‘Mountbatten’ room on the 6th floor of the QEII conference centre for most of the day, which is a BIG room.

Sarah Wells got us off to a great start with ‘Why Governance Matters: The Key to Reducing Risk Without Slowing Down’, and it was pleasing to see it listed as one of the top rated Tuesday talks during the Wednesday intros.

Alex Zenla followed with a Minecraft based explanation of ‘Building on Bedrock: A Security Philosophy from Bootloader to Runtime‘.

Viktor Petersson (I think) pulled in the largest audience for ‘From Chaos to Clarity: Modern SBOM Practices That Actually Work‘ (InfoQ writeup). I guess my scary point about EU Cyber Resilience Act (CRA) requirements might have caught some attention.

We then had an unconference session, which broke into two groups talking over a variety of issues that had been chosen and voted on by attendees.

Wrapping things up in the slightly smaller Windsor room on the 5th floor we had Andrew Martin talking about ‘Exploding GPUs‘ then David Chisnall on ‘Adopting Memory-Safety and Fine-Grained Compartmentalisation With CHERI‘.

You might notice that there’s no ‘AI’ in any of those titles. That didn’t mean that we weren’t talking about the industry’s hottest topic, just that it didn’t need to be centre stage.

As I was leaving the venue somebody looked at my badge and commented ‘you must be tired’, but in fact that wasn’t true at all. It had been a really energising day, and I was looking forward to more at the speaker’s dinner.

Networking

One of the first people I saw at the venue was Alex Zenla, and it was great to hang out with her for the many sessions we’d chosen in common. It was also good to spend time with Viktor, and there was more to come at Monki Gras. But there was plenty of ‘hallway track’ providing opportunities to catch up with friends and meet new folk. The venue for the speakers’ dinner seemed to work well this year – definitely less wobbly than the previous one[4]. Finally it was wonderful to join the crew and InfoQ Editors at Flight Club for the wrap up event. I’ve not been to a dedicated darts venue before, and it was a lot of fun.

Conclusion

I know from past events that QCon has a culture of continuous improvement, and that really showed this time, as I’m pretty sure 2026 was the best yet. Hopefully I get to be involved in 2027, and it’s even better…

Notes

[1] QCon 2025 collided with the Easter holidays, so I missed attending in person even though I’d been on the programme committee.

[2] I’m linking to the InfoQ write ups of the talks, as it will be some months before the videos and transcripts are released.

[3] Sadly Liz Rice had to drop out shortly before the event, but it was great that Andrew Martin could step in.

[4] Recent past speaker’s dinners have been at the Tattershall Castle (aka ‘The General Belgrano’ to my Navy pals working in MoD Main Building), a ‘pub’ on a boat moored on the Thames near Whitehall. As it’s afloat it can bob around a bit as other traffic passes by on the river.

Filed under: security, technology

Tags: conference, InfoQ, QCon, security

Monki Gras 2026

TL;DR

2026 was the best Monki Gras so far with a theme of ‘prepping craft’. A room full of techies, and a great gathering of friends, but not really a tech conference. It’s transcended tech, and become the place where the talks are about more important stuff.

Monki What?

Monki Gras is a London based tech conference organised by RedMonk founder James Governor. It’s essentially the UK version of Monktoberfest, which is also an event about the craft of software and the craft of beer.

Monki Gras has been going since 2012, took a break from 2020-2023, and has been back since 2024. It used to be at the end of the week before FOSDEM (to catch international travellers on their way through London), and more recently has been aligned to KubeCon Europe. I’m among a handful of people who’ve been to them all.

Resilience

Given the line up of speakers one might expect talks about fault tolerant architectures, and observability, and chaos engineering. If you want those talks then catch the same speakers anywhere else that they ply their trade.

What we got instead were stories of personal resilience, building a resilient career, and how to survive in the hostile political and economic climate we find ourselves in.

The most impactful talks were on the topic of trans rights, or rather how to survive when those rights are under constant attack by bigots waging their ‘culture wars’. Trans rights are human rights, and we must all stand firm against their erosion.

Hazel Weakly’s talk hit especially hard. At the end I turned to Paul sat beside me and commented, “and that’s why I don’t speak at Monki Gras, because who wants to follow that!”[1,2].

Gathering of the clan

Every break was a chance to chat with friends (and meet new folk), and Monki Gras has become ‘the room where things happen’, or at least the room where things get started. Some of the most impactful things I’ve been involved in started as Monki Gras conversations (e.g. DXC Online DevOps Dojo). So I have high hopes for where some of this year’s chats will lead…

Code Your Future

I’d barely set foot into the venue when James introduced me to Elena Barker from Code Your Future to enlist me as a mentor for the folk coming along under the auspices of the diversity & inclusion programme. It was a real pleasure to meet some of the participants and talk about how we’re all on a learning journey. Working with early career folk has been one of the highlights of Atsign over the past five years, so this was a chance to chat with some similarly talented and enthusiastic people. Elena has a post on LinkedIn about it.

Beer

Rob surpassed himself with this year’s selection, serving up a couple of DIPAs that I gave 5* and 4.75* on Untappd[3].

It was also good to see some low/no alcohol choices, with ‘small’ beer on offer along with (one of my faves) Bristol Beer Factory “Clear Head”.

Wine

Wine featured much more prominently than in previous years, with a wide selection of boxed wines from Bobo. I like the concept, but I didn’t spend any of my units on trying any. Maybe I should have tried some sips.

Food

Monki Gras has always had great food, and this year was no exception. What did seem to be different was less queuing. The street food vendors were well prepped, and able to deal with the onslaughts.

There was the now obligatory ‘cheese mountain’ :)

Hotel

There isn’t an official conference hotel, and the places listed on the website are all a bit spendy. When I looked this year, the Hub by Premier Inn Shoreditch popped up. A 10 minute walk from the venue, and pennies over £60 for the night – I had no hesitation booking.

As things worked out I ended up staying there last month with my wife, so I already knew it would be good this time around. The smaller ‘pod’ style room was plenty for my short stay, and I really appreciated the speed and simplicity of the automated check-in process.

I’ve skipped over a LOT

I didn’t take photos (of anything except beer for Untappd), and I didn’t take detailed notes. Others did.

#monkigras on Bluesky seemed to be where the live action took place.

And there have been some blog posts, from Dave Letorey and Alex Chan.

Notes

[1] Almost every conference I go to is as a speaker, exhibitor, organiser or whatever. Very rarely just a regular attendee. I decided some time ago that I would not speak at Monki Gras, as I don’t want to ‘sing for my supper’, I’d rather just enjoy the event (even if I have to buy a ticket from my own pocket and take time off work).

[2] James did an excellent job of providing padding between Hazel’s talk and Daniel’s that followed.

[3] Untappd featured in the very first Monki Gras, and I signed up straight away, but it was a (now regrettable) few years before I became a regular user in order to track what I like.

[4] I’m sure when Rob showed me his list this was down as PuTTY, like the terminal emulator, though the can clearly has another T.

Filed under: beer, culture, did_do_better, politics

Tags: beer, food, Monki Gras, Monkigras, resilience, wine

February 2026

Pupdate

It’s been a pretty dank February, so the coats have mostly stayed on for walks. But the boys have been enjoying their usual doggy mischief.

Milo is now half way through his 4th chemo protocol, and the second half has previously been easier as the pace slows down to vet visits every two weeks.

Cataracts

On the second day in Les Arcs I noticed that my vision wasn’t right, initially thinking I’d not put my right contact lens in correctly (the only time I wear lenses these days is for skiing).

After some fussing with lenses and staring at the hotel sign opposite my room I figured out that I could (sort of) fix things up with a stronger lens (an old prescription left lens), but there was definitely something wrong, and I booked the first appointment I could get for an eye test on my return.

Before I even got in front of an eye chart, the machines confirmed something was wrong. My right eye correction had jumped from -1.50 to -4.75 :0 It didn’t take long for the optician to see what was wrong, a cataract; and she referred me for specialist treatment. In many ways this was a comforting diagnosis, as of all the things that could be wrong it’s something that’s relatively straightforward to fix.

Three weeks later and I was at the optometrist for pre-op tests on the NHS path; but I’m now waiting for a private consultation at the end of next month, as I’d like a new lens that corrects my distance vision and astigmatism. After wearing glasses and contact lenses since I was 10 I might be free of them.

Meanwhile I’m wearing a contact lens and my varifocals.

Shingrix Pt.2

When I got my first Shingrix vaccine back in December the pharmacist warned me that it would kick my butt, and she was right. This time around I was told things would be easier, but they weren’t. If anything the aches etc. were even worse. Hopefully it’s all worth it to reduce my risk of dementia.

Protest

I’ve been going along pretty regularly to monthly meetings of my local Humanists group. A busy meeting might be a dozen people, so I wasn’t at all expecting what happened at our meeting on ‘Asylum and immigration: a compassionate, informed humanist approach‘.

Perhaps having our local MP Alison Bennett as a speaker should have tipped me off; but I was unaware of the building drama until some dinner guests the night before said “see you in the morning, we’ll be there at the counter protest”. I guess that’s what keeping off a diet of toxic social media does for you :)

I wish I’d taken a photo when I got there, but it was bucketing with rain, and I just wanted to get inside. There was a thin rabble of ‘stop the boats‘ protesters chanting their slogans on the outside of a police line with around 15 officers. Inside the line was the much larger counter protest group, including my friends. Beyond that the venue was pretty much at capacity, with hundreds of folk who’d come along. The speakers were all excellent, and it was good to meet some new folk as I spoke to those sat nearby.

It will be interesting to see if our ranks swell at the next meeting on the much less controversial topic of ‘Exploring our Humanist heritage’.

British Museum

I’ve been to the Natural History Museum and Science Museum more times than I can remember (starting back at my first trip to London when I was 7), but I’ve never been to the British Museum. We decided to do something about that during $wife’s half term break, which provided a good excuse for a day up in ‘Town’.

“Why do we have to be there at 2pm?”… ‘that’s when I booked the tickets for’. “I thought it was free?”… ‘it is, but you can reserve an arrival time (and they strongly encourage a ‘donation’).’ I was glad we had booked a spot, the line of people who’d just shown up without doing that was enormous, and not moving very fast. We went to the tent as directed, did our audience participation security theatre, and got inside in a matter of minutes.

I wish I could say it was great to see the Rosetta Stone, but the crowds made it impossible to see the business side of it. The crowds were less of an issue for the Elgin Marbles, as there’s just so much more of them. Though far too many came with signs to the effect of ‘missing head is in Athens’. Perhaps more impressive were some of the Assyrian exhibits; but I think we both came away feeling that none of that stuff belongs in London, and we should give it all back.

As we explored the further reaches it became less busy, but also more mundane. Things like those we’ve seen in many other museums in many different places. I was glad to have checked it off the list, but I don’t think I’ll be hurrying back.

Solar diary

A dank month for dog walks also meant a dark month for solar production – the worst February yet :(

Clay Hunt VR

In previous posts I’ve been dubious about whether practice in Clay Hunt VR carries over to an improvement in real world clays shooting. Now I’m more persuaded, having put in some of the best rounds of my life (despite the cataract). I’m finding that I have better focus on the target, and more instinctive aim.

After the Shingrix vaccination I woke one morning to a very painful right arm (possibly ‘frozen shoulder‘ though also maybe just lying awkwardly). This made shouldering the VR gun an exercise that I didn’t want to repeat. Fine for a quick round of skeet, but too tiring for anything else. So I did a game of ‘Duck Hunt’ purely shooting from the hip. As the saying goes… “Not great, not terrible”. I could probably do better with more practice, but thankfully the arm was back to normal after a couple of days :) The point however is that with unlimited free ammo you can practice stuff that would be reckless in real life.

Filed under: monthly_update

Tags: British Museum, cataract, clays, dachshund, humanist, Miniature Dachshund, Shingrix, shooting, solar, vaccine, vr